Introduction

Sequoia Capital made a shocking declaration at the 2026 AI Ascent Conference: we are already in the AGI era. When an AI agent can recover from failure and persist until a task is completed, it constitutes general artificial intelligence from a commercial perspective. This article delves into three major characteristics of this cognitive revolution: a trillion-dollar service market, super-exponential development speed, and a qualitative shift from communication to computation.

Have you ever thought that we might already be living in the AGI era? Not as a scene from a science fiction novel, nor as a distant future, but right now. At the 2026 AI Ascent Conference, three partners from Sequoia Capital—Pat Grady, Sonya Huang, and Konstantine Buhler—directly announced: this is AGI. This declaration shocked me, not because they used the term, but because they provided an extremely pragmatic definition: if you can send an AI agent to complete a task, and it can recover from failure and persist until the job is done, then that is AGI. From a business, practical, and functional perspective, this is sufficient.

After listening to the entire presentation, I felt enlightened. For the past few years, we have been discussing how AI will change the world, but most people may still be at the level of “let’s improve efficiency by 10% to 40%.” Sequoia’s viewpoint is that the car has arrived. Not a faster horse, but a real automobile. This means not incremental improvements, but a fundamental transformation in how we work. Driving a car is completely different from riding a horse, and manufacturing cars is also fundamentally different from raising horses. What we are experiencing is a race of a different nature.

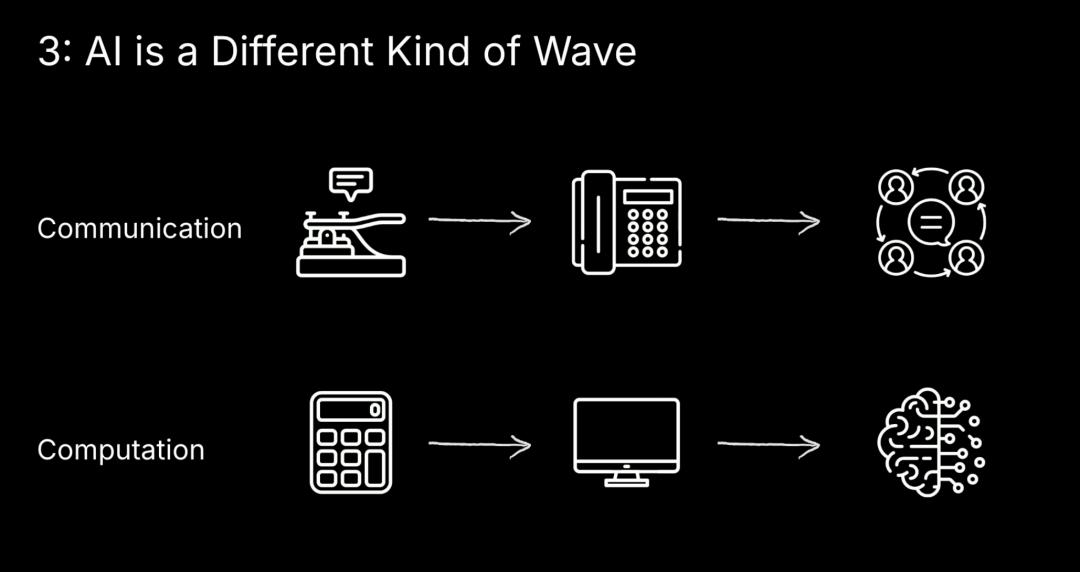

This is Not a Communication Revolution, But a Computation Revolution

Pat Grady presented an extremely important point during his speech: the AI revolution is different from all the technological revolutions we have experienced in the past. The internet, cloud computing, and mobile internet are all revolutions in communication, concerning how information is distributed. But AI is a revolution in computation, concerning how information is processed. This may sound like a semantic distinction, but in reality, these are two completely different waves.

I deeply understand the implications of this difference. A communication revolution is characterized by relatively stable infrastructure; when you build applications on top of it, the underlying systems do not change from day to day. Computation is different: the ground beneath your feet is always shifting, because every new capability changes the technological foundation you are building on. In the past few years, we have experienced three significant turning points. The launch of ChatGPT in November 2022 showcased the power of pre-training. About two years later, the reasoning capabilities of the o1 model revealed a second scaling law in inference-time compute. Most recently, Claude Code, Opus 4.5, and 4.7 demonstrated the power of long horizon agents.

Pat is right that there is a hard break between the second and third turning points, marking a discontinuous change. The first two turning points made AI smarter, but the third turning point enables AI to truly get the job done. This is why Sequoia boldly claims, “this is AGI.” Even if you disagree that this is AGI, I believe we can all see that the car has arrived. In recent years, we have had many “faster horses”—applications that improve your efficiency by 10% or 40%—but have not fundamentally changed how you work. Now we are starting to see “cars”—applications that improve your efficiency by 10 times or 40 times, fundamentally altering your work methods, nature, and even organizational structure.

This transformation has a massive impact on me personally. I realize we can no longer think about AI using past mindsets. This is not a gradual change that can be adapted to slowly; it is a paradigm shift that requires immediate rethinking of everything. From product design and business models to organizational structures, everything needs to be reevaluated.

The True Breakthrough of Long Horizon Agents

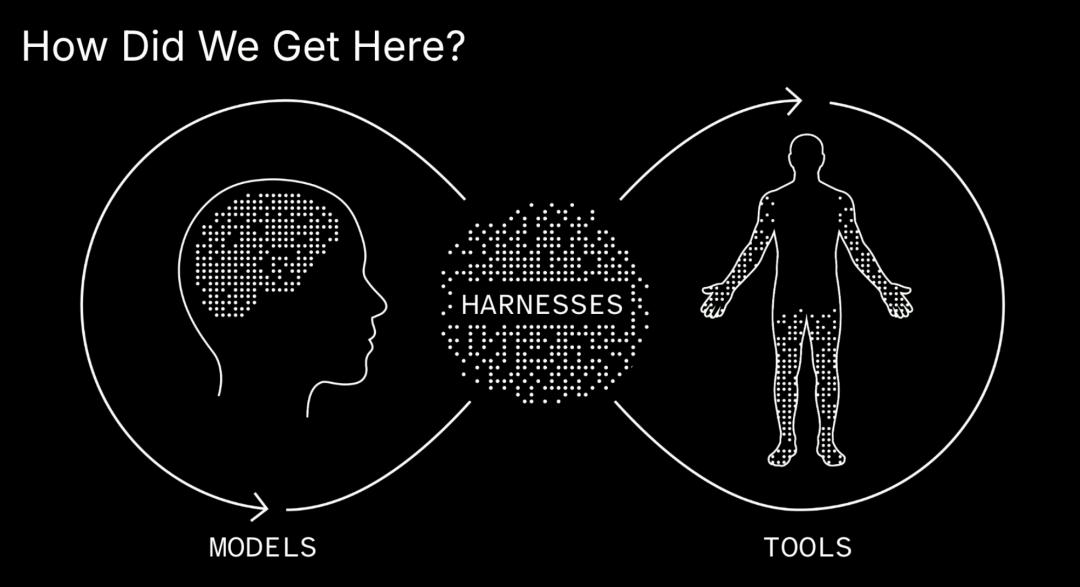

Sonya Huang discussed the evolution of agents during her presentation, and this history illustrates the point particularly well. In 2023, projects like AutoGPT and BabyAGI became overnight sensations on GitHub. Their approach was to give a GPT model some tools, wrap it in a loop, and let it run towards a goal. It sounded promising until you watched these agents fail over and over again. They were somewhat endearing but completely useless.

This example reminds us that we knew agents would arrive years ago, but the models were not ready then. Fast forward to today, and significant changes have indeed occurred. Suddenly, agents are everywhere, and they seem to work. Claude Code is a home run for the tech crowd, while OpenClaw (and all its lobster siblings) allows anyone with a phone to use agents. Whether you are a hardcore engineer or an average person, the point is that anyone can now create agents.

Sonya provided a precise definition of agents: an agent is a system that perceives its environment, selects actions, and autonomously moves towards a goal. More specifically, agents have three functional components. The first is the ability to reason and plan, which is the baseline level of intuition and immediate thinking. The second is the ability to take actions, including tools, searching, writing, compiling, etc. The third is the ability to iterate towards a goal, which allows agents to complete tasks over long time spans. Agency combines these three points, simply put, it is the ability to get things done.
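The three components above (plan, act, iterate) together with the "recover from failure" criterion can be sketched as a small loop. This is only a toy illustration under stated assumptions: the `Agent` class, the `make_flaky_double` helper, and the numeric "goal" are hypothetical stand-ins invented for this sketch, not anything shown in the presentation or taken from a real agent framework.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


def make_flaky_double() -> Callable[[int], int]:
    """Hypothetical tool that fails on its first call, then works."""
    calls = {"n": 0}

    def double(x: int) -> int:
        calls["n"] += 1
        if calls["n"] == 1:
            raise RuntimeError("transient tool error")
        return x * 2

    return double


@dataclass
class Agent:
    """Toy agent: plan, act with a tool, and iterate until the goal is met."""
    tools: Dict[str, Callable[[int], int]]
    max_steps: int = 20
    log: List[str] = field(default_factory=list)

    def plan(self, state: int, goal: int) -> str:
        # Stand-in for reasoning: pick the tool that moves closer to the goal.
        return "double" if state * 2 <= goal else "increment"

    def run(self, state: int, goal: int) -> int:
        for step in range(self.max_steps):
            if state == goal:
                break
            action = self.plan(state, goal)
            try:
                state = self.tools[action](state)
                self.log.append(f"{step}: {action} -> {state}")
            except RuntimeError as err:
                # Recover from failure: record it and fall back to another tool.
                self.log.append(f"{step}: {action} failed ({err}); falling back")
                state = self.tools["increment"](state)
        return state


agent = Agent(tools={"increment": lambda x: x + 1, "double": make_flaky_double()})
result = agent.run(state=1, goal=10)
```

The point of the sketch is the shape of the loop rather than the toy arithmetic: the first tool call fails, the agent logs the failure, falls back to another action, and keeps iterating until the goal is reached or the step budget runs out.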

I was particularly interested in a chart Sonya displayed, the METR chart, which measures how long models can maintain performance on complex tasks without deviating from their path. A year ago, it was on the scale of tens of minutes; today, it is on the scale of several hours. This is the most significant progress. Models have finally become strong enough to maintain performance on long-term tasks. This is not a minor improvement but a qualitative leap from “unusable” to “usable.”

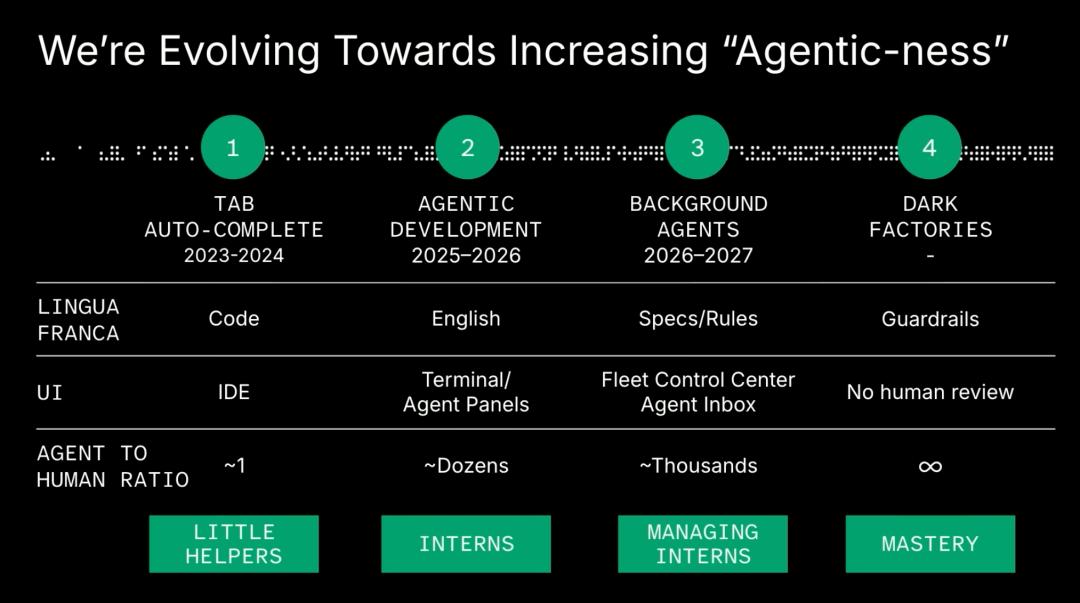

Now we see agents existing on a sliding scale of “agentness.” For example, in programming, in 2023 we had tab auto-completion, where an AI assists a human one line at a time. This is incrementally useful but not transformative. Now we have agentic development, where a human converses with an agent, instructing it on what to do, potentially managing a team of agents. But this paradigm is still being pushed further. We now see background agents, asynchronous agents, and agents generating sub-agents. Sonya believes that asynchronous agents may come to outnumber agents in the current conversational paradigm because of the leverage they provide. At the forefront are what she calls “dark factories,” which completely remove human oversight from the system. This sounds crazy, but she mentioned seeing it in production environments, including at cybersecurity companies. As long as there are sufficient safeguards and engineering, this is possible.

I feel both excited and uneasy about the concept of “dark factories.” The excitement stems from it representing the ultimate leap in productivity, while the unease arises from the fact that we are truly handing critical decisions over to AI. But I also realize that this may be an inevitable trend. Agents are evolving from small assistants doing minor tasks to interns that need to be managed, then to self-managing interns, and ultimately to interns that can be trusted to push to production without supervision. This evolution is happening not only in programming but across all applications of agents.

Why This Opportunity Is So Huge

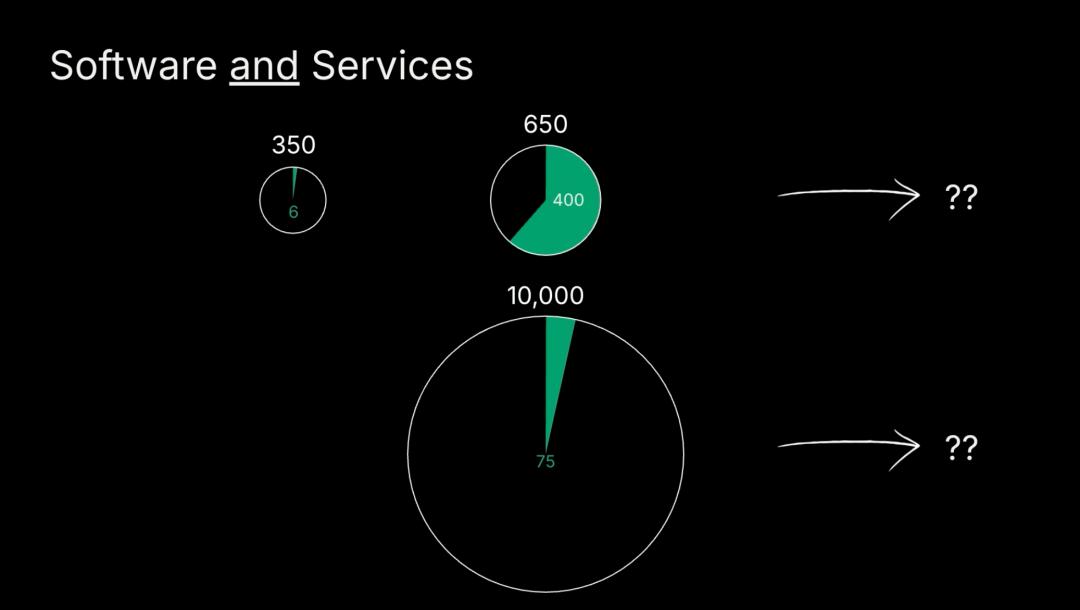

Pat emphasized three aspects of this AI wave’s uniqueness during his speech, each worth deep consideration. First, this is the largest wave to date. In the first 15 years of the cloud computing transition, the total addressable market (TAM) for software grew from about $350 billion to $650 billion, with cloud computing accounting for about $400 billion of that. But what is new is service revenue, which could be $10 trillion. Pat mentioned they do not know if it is exactly $10 trillion, $5 trillion, or $50 trillion, but they know that the U.S. legal services market alone is about $400 billion. In other words, a single vertical in a single geography is comparable in scale to the software market itself.

My understanding of this figure is that in the past, we were only optimizing software itself, but now we are replacing services. While the software market is large, the service market is much larger. When AI can truly perform the work of lawyers, doctors, analysts, and consultants, we are opening a completely different magnitude of market. This is not software eating the world, but AI eating the service industry. The profound significance of this shift is that we are no longer limited by software licensing and subscription business models but can charge directly based on results, just like hiring service providers.

This figure shocked me. We have always viewed software as a massive market, but now AI is opening up the service market, which is an order of magnitude larger opportunity. Sonya also emphasized this point in her speech: services are the new software. This is not just a slogan but a reality that is happening. In healthcare, you can hire an agent to check your genome, provide personalized advice, and even prescribe medication or recommend clinical trials. In the legal field, you can hire agents to negotiate contracts on your behalf, even litigate and settle for you. In mathematics and science, we see agents solving Erdős problems or discovering new superconductors. In the consumer space, personal agents can manage your inbox, calendar, finances, and help you file taxes.

I believe the rapid and large-scale deployment of agents is due to the clarity of the economics. Sonya presented a compelling comparison. Humans are difficult to scale, while agents scale with computation. Humans are hard to keep happy (she joked that she is the exception, since she is always happy), while agents are low maintenance. Humans are expensive because you pay them salaries, while you pay agents in tokens, and the token cost of completing a task is usually lower than the equivalent salary cost. Today, humans are often smarter, but the bitter lesson continues to play out, and soon agents will be smarter than humans at many tasks.
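The tokens-versus-salary comparison above comes down to simple arithmetic, which can be sketched as follows. Every number here is a hypothetical placeholder chosen only to make the calculation concrete; none of them come from the presentation, and real token prices, token counts, and labor rates vary widely.

```python
def agent_cost_usd(tokens_used: int, usd_per_million_tokens: float) -> float:
    """Cost of a task paid in tokens."""
    return tokens_used / 1_000_000 * usd_per_million_tokens


def human_cost_usd(hours: float, hourly_rate_usd: float) -> float:
    """Cost of the same task paid as labor time."""
    return hours * hourly_rate_usd


# Hypothetical inputs, for illustration only.
agent_cost = agent_cost_usd(tokens_used=2_000_000, usd_per_million_tokens=10.0)
human_cost = human_cost_usd(hours=4, hourly_rate_usd=50.0)
cost_ratio = human_cost / agent_cost  # how many times cheaper the agent is
```

Under these made-up inputs the agent is cheaper, but the comparison is only as good as the assumptions: a task that burns far more tokens, or needs human review afterwards, shifts the ratio.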

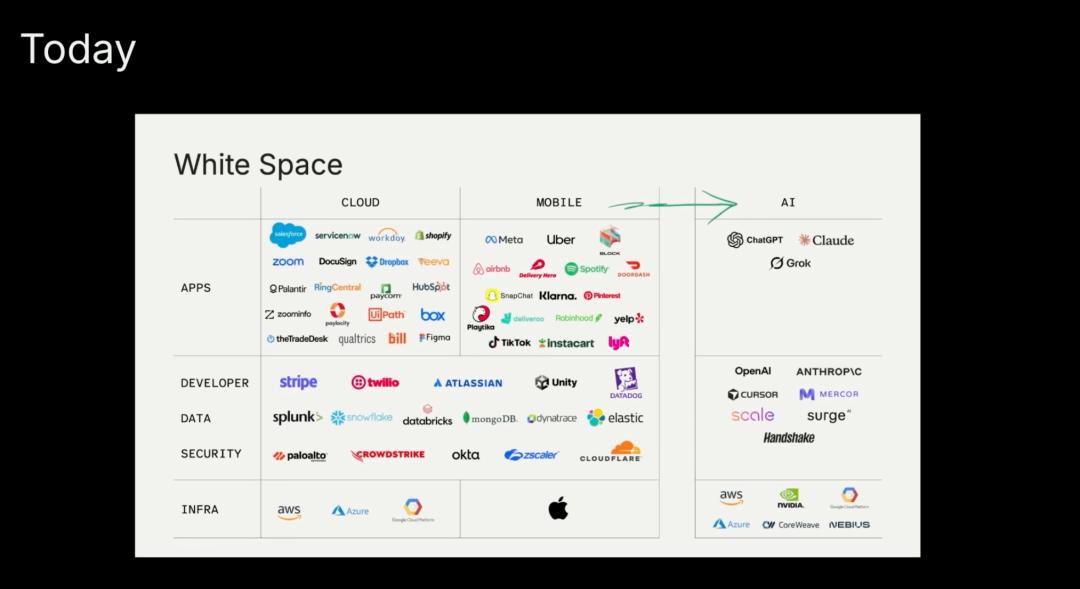

The second characteristic is that this is the fastest wave. We can all feel this. Pat showed a slide where the blank space on the AI side is being filled very quickly. These logos are companies that have reached over $1 billion in revenue due to transformations driven by cloud computing, mobile internet, and now AI. At the current speed, more companies are on the way. This speed means we do not have much time to adapt slowly; we must act quickly. But Pat also reminded us of an important fact: no lead is safe. He used a racing metaphor: “You cannot overtake 15 cars in the sunshine, but you can overtake 15 cars in the rain.” Now foundation models are pouring out new capabilities like heavy rain, which means no lead is safe, but it also means anyone can win.

My understanding of this point is that in a stable technological environment (sunny days), first-mover advantage is crucial, and latecomers find it hard to catch up. But in a rapidly changing technological environment (rainy days), everything becomes uncertain, and new opportunities constantly emerge. Today’s leaders may fall behind tomorrow because new capabilities change the rules of the game. This presents both challenges and opportunities for entrepreneurs. The challenge is that you must continuously adapt and evolve, while the opportunity is that you always have a chance to surpass competitors as long as you can better leverage new capabilities.

The third characteristic I mentioned earlier is that this is a revolution in computation rather than communication. Pat particularly emphasized the importance of this point. The past revolutions of the internet, cloud computing, and mobile internet were about how information is distributed; they were communication revolutions. These revolutions are characterized by relatively stable infrastructure, allowing you to build applications on a relatively stable platform. But AI is different; AI is about how information is processed; it is a revolution in computation. This means the ground beneath your feet is always shifting, and the technological foundation you build upon changes daily.

Pat stated that in his generation’s career, they have only experienced communication revolutions. This is the first true computation revolution. The implications of this difference are profound. In a communication revolution, you can formulate a five-year plan and execute it. But in a computation revolution, a five-year plan is meaningless because the underlying capabilities may undergo fundamental changes every month. This requires us to adopt completely different strategic thinking—more agile and adaptable.

MAD Strategy Framework for Entrepreneurs

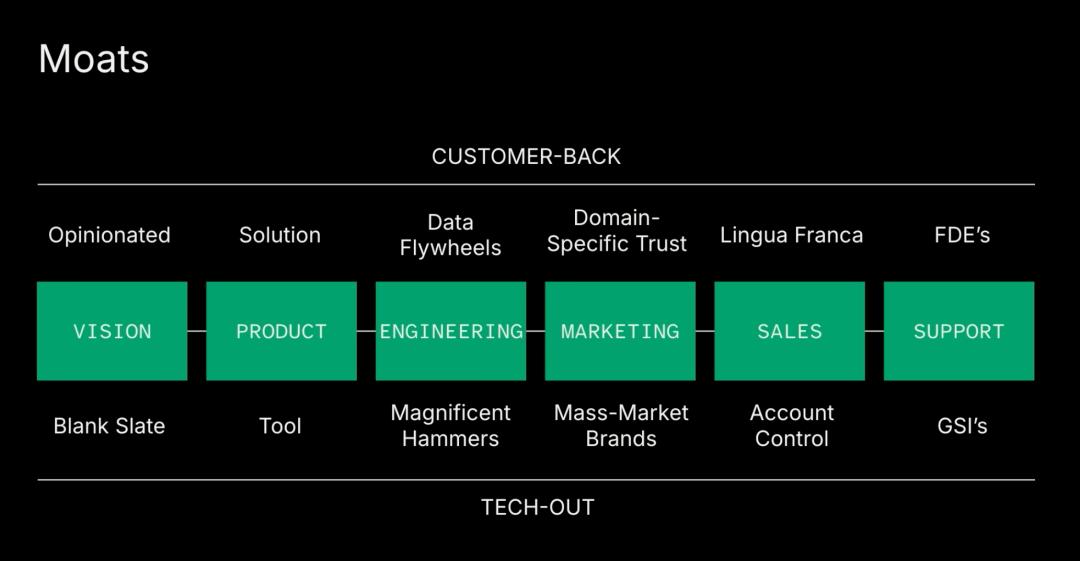

Pat provided a framework for entrepreneurs building applications on top of models, which he called MAD. He jokingly said this is free advice, so it is worth every penny you pay for it. But I find this framework very valuable because it directly points to how to establish lasting competitive advantages in this rapidly changing era. MAD stands for Moats, Affordance, and Diffusion.

Before discussing MAD, Pat first presented a concept called the merchandising cycle, which includes all the links in the value chain from idea to satisfied customers. His core point is that if you look at it from a tech-out perspective, you will handle each link in the value chain in a certain way. But if you look at it from a customer-back perspective, you will handle each link in a completely different way.

Here is a counterintuitive part that impressed me. In a computation revolution, which is about information processing, you might want to look down at the cool new capabilities that keep emerging. But to build a moat, you actually want to look up at your customers, because they change far more slowly than capabilities do. The product you build may become irrelevant tomorrow, but the depth you establish around customers will be more lasting.

Regarding moats, Pat emphasized that this does not mean products and technology are unimportant; they are extremely important, and usually, the best products win. But in a world where products change so quickly because capabilities change so rapidly, he encourages us to be as customer-centric as possible when thinking about moats, considering all the ways to build around customers. I understand this to mean deeply understanding customers’ workflows, pain points, and decision-making processes, establishing trust, and becoming an indispensable part of their business. When technology changes, this customer relationship will give you the opportunity to continue serving them, even with different technologies.

The concept of Affordance that Pat borrowed from the design world is particularly well-chosen. A hammer is an object with affordance. If he gives his two-year-old son a hammer, the son will know what to do—grab it and start banging things. That is why they do not give him a hammer. An object with affordance does not need explanation; people know how to use it.

Pat gave a great example. Claude Code is extremely powerful, but for the average Fortune 500 employee, opening a terminal to see how far they can go is not intuitive. While it is powerful, it does not provide much affordance. This is not a criticism of Anthropic but an opportunity for anyone wanting to build on top of it. Your job is to create the path of least resistance for your specific customers and their specific problems, allowing them to easily find the results their business needs.

My understanding of affordance is that there is a huge gap between technological capabilities and what users can actually use. Even the most powerful tools have no value if users do not know how to use them or if they are too complex to use. The opportunity for application-layer companies is to fill this gap, transforming powerful but complex technology into simple and intuitive user experiences. This requires a deep understanding of users’ mental models, skill levels, and work environments. You are not educating users on how to use complex technology but adapting technology to fit users’ existing workflows.

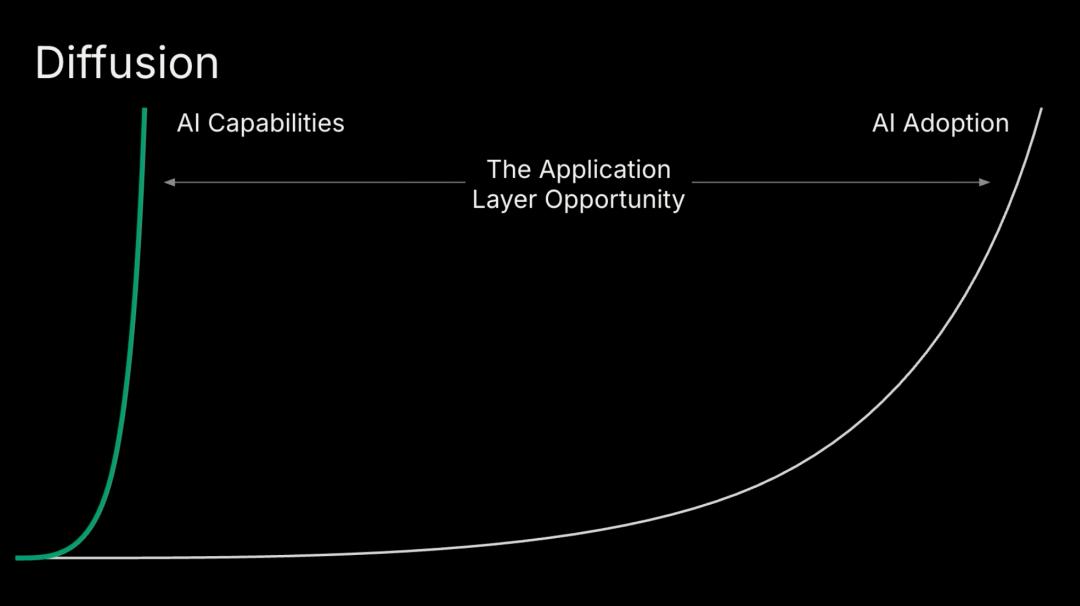

The diffusion gap is the third dimension of opportunity for application-layer companies. Pat pointed out that the speed at which capabilities diffuse into the market lags far behind the speed at which these capabilities are created. Whenever the pace of progress in foundation models exceeds that of your average Fortune 500 company, this gap widens, and the opportunity grows.

My understanding of this point is that innovation always happens first in labs and at the cutting edge of companies, but it takes time for most businesses to adopt these innovations. They need to evaluate, test, integrate, and train. In the AI era, this gap is particularly large because technological advancements are so rapid. Every day, new models and new capabilities are released, but most companies are still trying to figure out how to use technology from six months ago. This gap represents the opportunity for application-layer companies—to help businesses bridge this divide, enabling them to actually use the latest capabilities.

Pat concluded that for moats, think as customer-centric as possible; for affordance, think about creating the path of least resistance for your customers; and that diffusion gap represents your opportunity. These three dimensions combined form a complete framework for establishing lasting competitive advantages in the AI era.

But Pat did not stop there. He also specifically reminded us that while that slide showing the blank space being filled may discourage some, thinking there are no opportunities left, remember: no lead is safe. Now, foundation models are pouring out new capabilities like heavy rain, meaning that companies that seem to dominate the market may have their leading positions overturned overnight. At the same time, this also means anyone can win as long as you can better leverage new capabilities and adapt to changes faster.

I particularly resonate with this viewpoint. In a stable technological environment, first-mover advantage is crucial; network effects and scale advantages create strong barriers. But in a rapidly changing technological environment, these barriers can become irrelevant overnight. New capabilities may render old product architectures obsolete, and new interaction methods may change user habits. This is why Pat said, “Living in this era is fantastic”—for those willing to innovate and act quickly, opportunities are everywhere.

A World Where Agents Are Ubiquitous

Sonya painted a vision of a world where agents are ubiquitous, which I find both exciting and thought-provoking. She mentioned that people are building agents for everything. Some are silly, like an OpenClaw agent that would report your neighbor’s tax evasion to the tax authorities (she said, “Please don’t do this, or maybe do it”). Others are entrepreneurial, with agents running generative media campaigns to sell construction services. There are also professional-level agents; she mentioned a huge internal competition at Sequoia to see who can build the best agents to get work done better.

The speed and scale of agent deployment will be unprecedented because the economic benefits are too clear, and agents have inherent scalability. This does not mean humans will become unemployed; Sonya believes that human adaptability is unique. However, we should indeed expect that the deployment of agents at the application layer will be very rapid and large-scale.

When you put all this together, the number of agents is expanding in an exponential, perhaps super-exponential manner. Sonya believes we are about to reach a point where things become truly strange. What happens when business occurs between agents? Can they pay each other? What happens when agents can actually negotiate transaction terms with each other? Will we have a large group of agents regulating us, preventing cybersecurity issues or large-scale destruction? We only know that the world is becoming strange at an incredibly fast pace.

I feel both excited and somewhat anxious about this future. The excitement comes from it representing a tremendous leap in human productivity. We can finally delegate those repetitive, tedious tasks to AI and focus on more creative and strategic work. But the anxiety arises from the fact that this transition will bring many unknown social and ethical issues. When agents can autonomously trade with each other, how do we regulate them? When agents make erroneous decisions, who is responsible? These are all questions we need to think seriously about.

Sonya concluded by channeling her inner Eliezer Yudkowsky (an AI safety researcher), saying: long horizon agents have arrived, and their development curve is very clear. For entrepreneurs, everyone has examples of hitting crazily difficult timelines because of AI. Zed’s Nathan completed a three-year moonshot project solo using Claude Code during the holidays. Bret Taylor rebuilt Sierra over a weekend. The Notion team rewrote 8 million lines of code in just six weeks.

Everyone has these compressed-timeline examples, but Sonya believes few outside AGI labs see what happens when you stack these compressed timelines together. This is what is possible now. So whatever you can imagine building in the next 100 years can now be achieved in 100 days, thanks to agents. This perspective deeply shocked me. We are not talking about incremental improvements; we are talking about compressing the time dimension. This means the speed of innovation will grow exponentially.

Cognitive Revolution: The Next Industrial Revolution

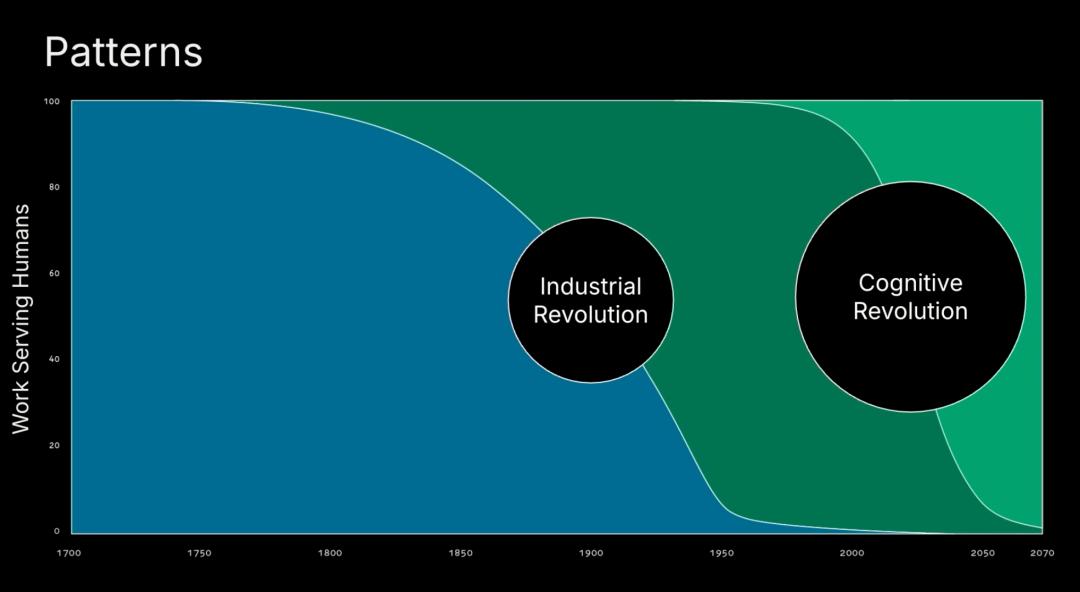

Konstantine Buhler’s part of the speech may have been the most philosophically profound of the entire presentation. He divided work into two types: physical work and cognitive work. Physical work is packages on the Pony Express or satellites on Falcon 9: work equal to force times distance, involving physical movement. Cognitive work is the theorem proposed by Pythagoras, DeepMind’s solution to the protein folding problem, and conscious thought. These are two very different types of work, but Konstantine believes they will follow very similar revolutionary patterns.

He talked about the revolution of physical work, which is the industrial revolution. For most of human history, nearly all work serving humanity was done by muscle, either human or animal. Humans moved things or animals pulled humans. His timeline began around 1700, but the same held for thousands of years before that. Then things began to change. First came water and wind power, then steam engines, and then things accelerated: internal combustion engines, electric motors. By 2026, you can estimate that over 99% of all physical work done for humanity on Earth is completed by machines. That includes the airplane that brought you here, the manufacturing of all the goods in this room, and all the setup behind the experience you are having right now.

Konstantine believes a similar pattern will occur in the cognitive realm, but we are still at an earlier stage. For most of human history, nearly all thinking done on Earth was done by humans, with perhaps a bit of contribution from animals, like sheepdogs herding sheep. Historically, there has been a small amount of mechanical computation, like astrolabes or clocks. For hundreds of years, until electronic computing emerged, progress was slow. In the last hundred years it has taken off: think about the trillions of calculations happening at any given moment to serve you as a human.

Konstantine believes neural networks are the next big wave, and in the near future, 99.9% of cognitive work on Earth will be completed by machines. This parallel is very clear. The good news is that we have experienced such a revolution. The cognitive revolution will be much like the industrial revolution, only larger and faster.

This perspective made me ponder deeply. If cognitive work is indeed taken over by machines like physical work, what will that mean for us humans? Konstantine provided his answer through four short stories.

Four Stories About the Future

The four stories Konstantine told deeply moved me, each revealing an important truth about the AI era. The first story is about aluminum. In the mid-19th century, America wanted to build a grand monument for its first president and greatest war hero, George Washington. They designed the tallest building in the world at the time, the Washington Monument. They wanted to top it with the world’s most precious metal, 100 ounces of the most precious metal. This metal was so precious that they displayed it at Tiffany’s in Manhattan. That metal was aluminum.

Shortly after the completion of the Washington Monument, a young inventor proposed an electrolytic method to separate aluminum from its ore. Within decades, aluminum was used to wrap candy and sandwiches, then thrown in the trash. Aluminum is like intelligence, and the electrolytic method is like artificial intelligence. We are about to enter a world where some of the most precious skills, requiring decades to acquire, can be called upon instantly, to the point where after use, you can crumple them up and throw them in the trash.

This metaphor is incredibly accurate. We are accustomed to viewing certain cognitive abilities as precious and scarce, but AI is making these abilities cheap and abundant. This is not to belittle human intelligence but to illustrate how technological progress redefines value. When expertise becomes as ubiquitous as aluminum, what will truly be valuable?

The second story is about alien design. The world we see today is all designed for humans. It is optimized in a way that makes sense to our brains because we perform almost all cognitive work in the world. When machines perform cognitive work, it will be somewhat different. In 2006, NASA was optimizing antennas for a large space mission. Traditionally, their antennas looked like beautiful, geometrically symmetrical patterns optimized for surface area under certain power constraints. This time, they let the computer handle the design using evolutionary algorithms (similar to reinforcement learning). The result was an antenna that performed significantly better but whose shape was not intuitive to human thinking.

In this AI era, when we hand cognitive tasks to machines, we will get results that are somewhat unintuitive for us. When AI designs chips, cars, and buildings, they may look very different. We must keep an open mind about the world we are entering because AI will not think like us. It will have alien designs.

This story reminds me not to judge AI outputs with human intuition. AI may find solutions we could never think of, which may seem strange or inelegant but could be more effective. We need to learn to appreciate this “alien aesthetics.”
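The evolutionary approach mentioned in the antenna story can be sketched in a few lines: keep the best candidates, mutate them, and repeat. This is a generic toy version, not NASA's actual method; the `evolve` function, its parameters, and the `toy_fitness` objective are all hypothetical and stand in for a real antenna simulation.

```python
import random
from typing import Callable, List

Genome = List[float]


def evolve(
    fitness: Callable[[Genome], float],
    genome_len: int = 8,
    pop_size: int = 30,
    generations: int = 60,
    sigma: float = 0.3,
    seed: int = 0,
) -> Genome:
    """Simple elitist evolutionary search that maximizes `fitness`."""
    rng = random.Random(seed)
    population = [
        [rng.uniform(-1.0, 1.0) for _ in range(genome_len)]
        for _ in range(pop_size)
    ]
    for _ in range(generations):
        # Selection: rank by fitness and keep the top fifth unchanged.
        population.sort(key=fitness, reverse=True)
        parents = population[: max(1, pop_size // 5)]
        # Variation: refill the population with mutated copies of survivors.
        children = []
        while len(parents) + len(children) < pop_size:
            parent = rng.choice(parents)
            children.append([gene + rng.gauss(0.0, sigma) for gene in parent])
        population = parents + children
    return max(population, key=fitness)


def toy_fitness(genome: Genome) -> float:
    """Hypothetical objective: closeness to an arbitrary target shape."""
    target = [0.5, -0.2, 0.9, 0.0, -0.7, 0.3, 0.1, -0.4]
    return -sum((g - t) ** 2 for g, t in zip(genome, target))


best = evolve(toy_fitness)
```

Nothing in the loop encodes what a "good-looking" design is; it only rewards whatever scores well, which is why the results of this kind of search can look strange to a human designer.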

The third story is about emerging sciences. In the early days of the industrial revolution, great engineers like Newcomen and Watt developed and refined the steam engine. Essentially, they burned fuel to heat water, and the expanding steam, millions or billions of particles, drove a piston. For nearly a hundred years, all this was trial and error. Engineers would say, “Ah, this works a bit better.” Perhaps you can see something like scaling laws, but it was all engineers playing with products, seeing how to improve a little.

More than a century later, Sadi Carnot appeared and laid the foundations of a new science: thermodynamics. He said, “Wait a minute, there are millions or billions of particles; we can actually formalize what this looks like.” In the case of AI, there are billions of neurons and trillions of tokens. Right now, we are in the trial and error phase of AI. Even if we think this is an understood science, it is not. Over the next few decades, someone will introduce a foundational science like thermodynamics. Someone in this room may propose this science. It will be so fundamental that it will be taught in high school. It will help us master AI and even help us understand consciousness.

This perspective made me realize that our understanding of AI is still very superficial. Much of what we do now is empirical, like early steam engine engineers. But someday, someone will propose a complete theoretical framework to explain how AI works, and that will be a revolutionary moment.

The fourth story is about the irrationality of art. For most of human history, for thousands of years, art has progressed towards realism. From cave paintings 25,000 years ago, Egyptian hieroglyphs, Greek pottery, to the grand shift towards realistic art in Renaissance paintings. Look at the differences. After thousands of years, humanity has triumphed. Then engineering came along, the daguerreotype, early photography, and suddenly, the skill of perfecting every brushstroke over decades of life disappeared.

How did the world react? People thought painting was over. Oh, just like that, machines can do it better than any human, and art is finished. So what happened? How did humans respond? Humans responded by asking whether the purpose of this art was to capture the moment seen by the eye or the moment seen by the mind and soul. Impressionism, expressionism, cubism, neo-expressionism. All these new art forms are humanity’s response to this massive change in science.

2,500 years ago, the Greek philosopher Protagoras wrote: “Man is the measure of all things.” He meant that nothing has value in a vacuum. Not aluminum, not art, not intelligence. It only has value because of human experience. AI can do work; AI will do work. But only human connection can give work meaning. That is why we are all in this room today. Ten years from now, work will be very different, and things will change a lot. But one thing will remain constant: the relationships you build with those around you today will endure. That is what you will look back on, and that is what is valuable today.

My Deep Thoughts on This Revolution

After listening to the entire presentation, I have several profound insights.

First, we are indeed at a historic turning point. Sequoia’s claim that “this is AGI” is not hype but a pragmatic judgment based on actual capabilities. When agents can recover from failure and persist until a task is completed, this is sufficient from a business perspective. We do not need to wait for superintelligence from science fiction; we already have tools that can change the game.

Second, speed is the most notable characteristic of this revolution. Work that used to take 100 years can now be completed in 100 days; this is not an exaggeration. I see more and more examples around me of individuals using AI to accomplish tasks that previously required a team months to complete. This time compression will produce a compound effect, and the speed of innovation will grow exponentially. This means we must act quickly because the window of opportunity is very short.

Third, being customer-centric is more important than ever. In a rapidly changing technological era, the only anchor point is customer demand. Technological capabilities change daily, but the problems customers want to solve remain relatively stable. Companies that can deeply understand customers and build solutions around them will establish true moats.

Fourth, we need to prepare for a world where agents are ubiquitous. This is not science fiction but an imminent reality. As the number of agents grows exponentially, all aspects of society, economy, and law will need to adapt. We need to establish new frameworks to manage interactions between agents and ensure their behavior aligns with human values.

Fifth, and most importantly, amidst all technological changes, human connection remains core. AI can make us more efficient, but it cannot replace the relationships and emotional connections between people. In a world where cognitive work is taken over by machines, what will truly be valuable are those unique human qualities: creativity, empathy, curiosity, and adaptability.

I believe we are witnessing history. The cognitive revolution will profoundly change the world, just as the industrial revolution did, only it will be larger and faster. This is both exciting and awe-inspiring. We have a responsibility to ensure that this revolution benefits all of humanity, not just a privileged few. This requires the collective effort of all of us, involving tech experts, policymakers, entrepreneurs, and ordinary citizens.

Sequoia’s presentation inspired me greatly but also raised more questions. Are we ready to embrace this future? Can our education systems, legal frameworks, and social structures keep pace with the speed of these changes? How do we ensure that in the pursuit of efficiency, we do not lose our humanity? These are all questions we need to think and discuss seriously.
